10 Best MLOps Tools for Machine Learning Teams (2026)

The MLOps tooling landscape has exploded. From experiment trackers to full lifecycle platforms, every team faces the same question: which tools are actually worth using in production? This guide cuts through the noise with honest, hands-on analysis of the 10 most important MLOps tools — including one that’s shutting down.

The 10 Best MLOps Tools (Ranked)

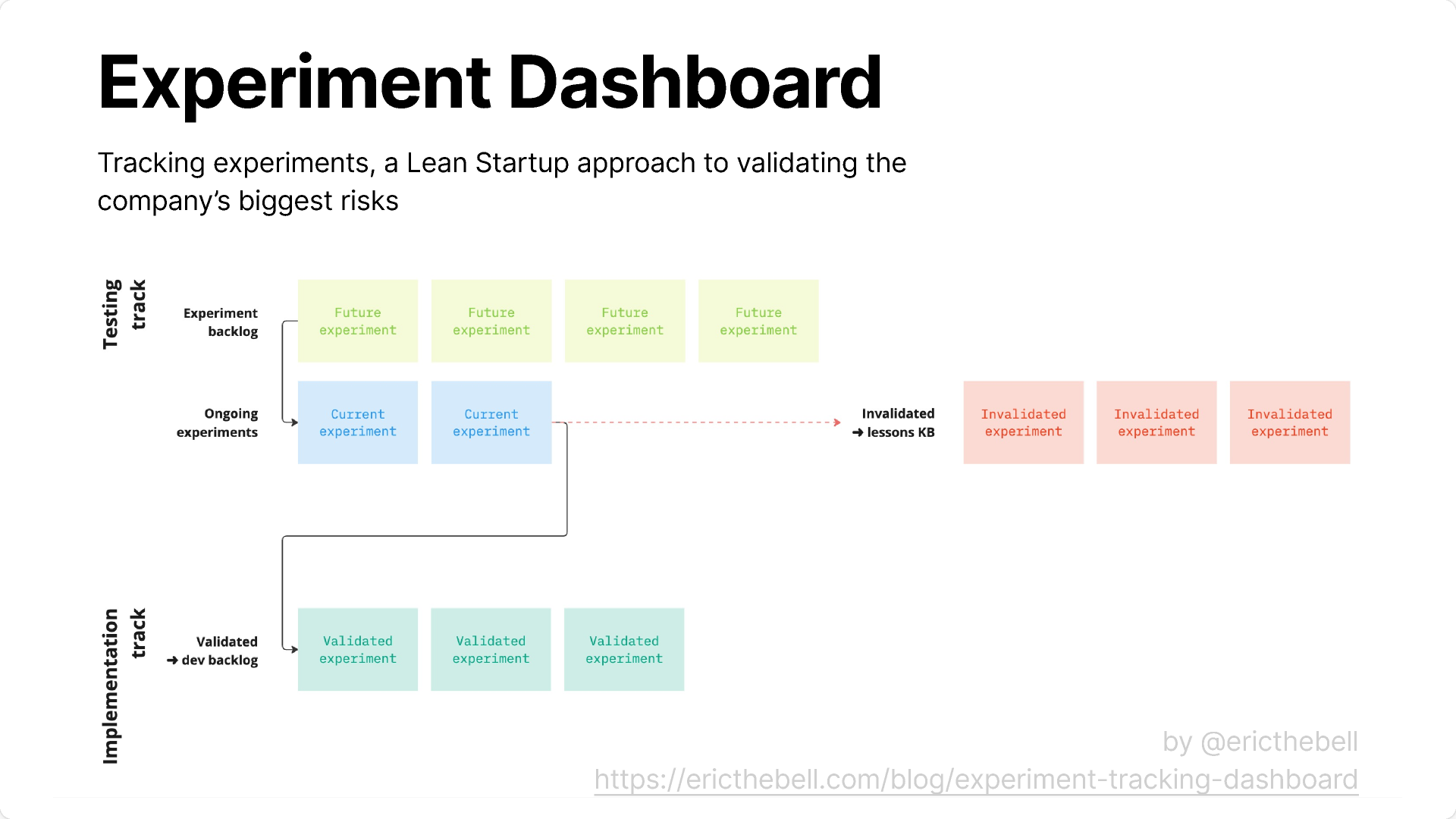

Weights & Biases remains the gold standard for experiment tracking in 2026. Its real-time dashboards, collaborative workspaces, and sweeps are best-in-class. The UI is genuinely beautiful — teams love demoing runs to stakeholders. The main friction point is cost. At $50+/user/month for teams, W&B becomes expensive fast. It also doesn’t do pipelines, data versioning, or model serving — you’ll need other tools alongside it.

Best for: Teams that prioritize UI polish, collaboration, and research workflows.

💰 Pricing: Free tier available | Team: $50+/user/month

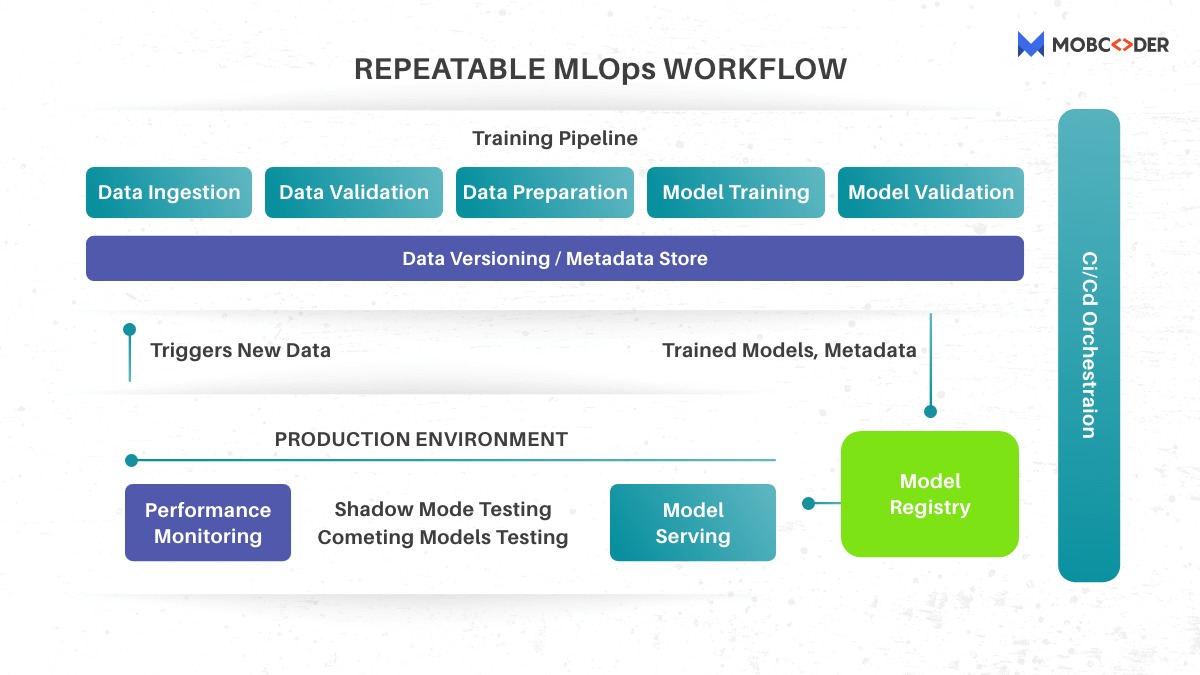

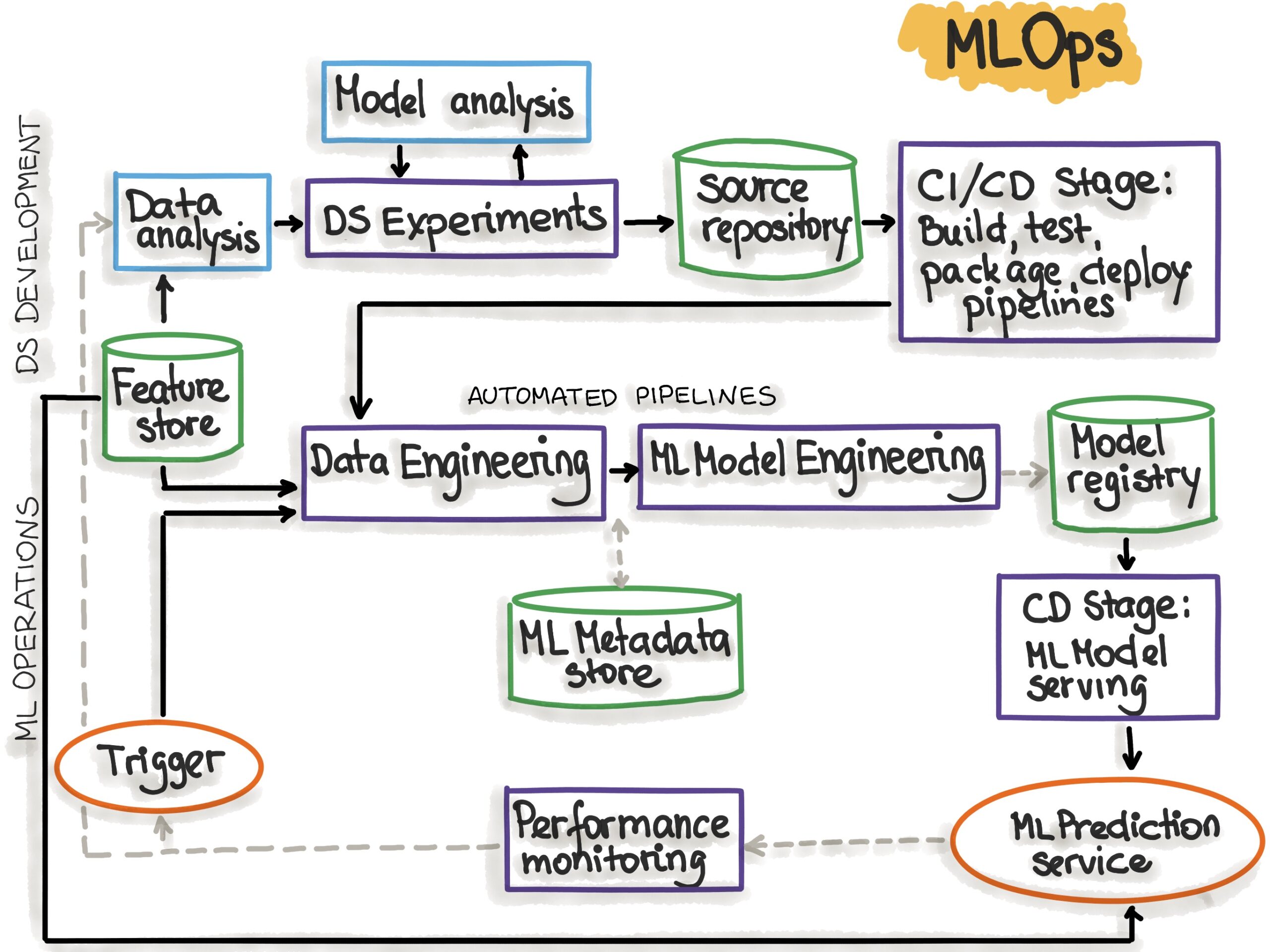

MLflow is the de facto open source experiment tracking standard. It’s embedded in Databricks, Azure ML, and AWS SageMaker — which means if you’re on any major cloud ML platform, you’re probably already using MLflow under the hood. The tracking server, model registry, and project format are battle-tested across thousands of production deployments. What MLflow is not: a full MLOps platform. It doesn’t orchestrate pipelines natively, doesn’t do data versioning, and the serving component is basic. But for teams that need reliable, portable experiment tracking without vendor lock-in, MLflow is the safest choice in 2026.

Best for: Teams on cloud ML platforms or anyone wanting free, portable tracking with no vendor dependency.

💰 Pricing: Free (open source)

ClearML (formerly Allegro Trains) is the most complete open source MLOps platform available in 2026. It handles experiment tracking, pipeline automation, data versioning, model serving, and hyperparameter optimization — all inside one interface. Auto-logging captures everything without manual instrumentation. The Pro tier at $15/user/month makes it dramatically cheaper than W&B while offering more features. Self-hosting gives you complete data sovereignty — critical for regulated industries. The trade-off is setup complexity.

Best for: Teams wanting a full MLOps stack without vendor lock-in or high per-seat costs.

💰 Pricing: Free tier | Pro: $15/user/month | Enterprise: Custom

Comet ML has carved out a strong niche in production model monitoring and LLM evaluation — an area that’s exploded in importance since 2023. Its Opik platform (for LLM evaluation and tracing) is genuinely ahead of most competitors. The experiment tracking is solid, and the production monitoring dashboards surface drift and degradation in ways that W&B and MLflow don’t cover well. Pricing sits between MLflow (free) and W&B ($50+), making it a reasonable middle ground.

Best for: Teams deploying LLMs or models to production who need strong monitoring, drift detection, and evaluation pipelines.

💰 Pricing: Free tier available | Custom pricing for teams

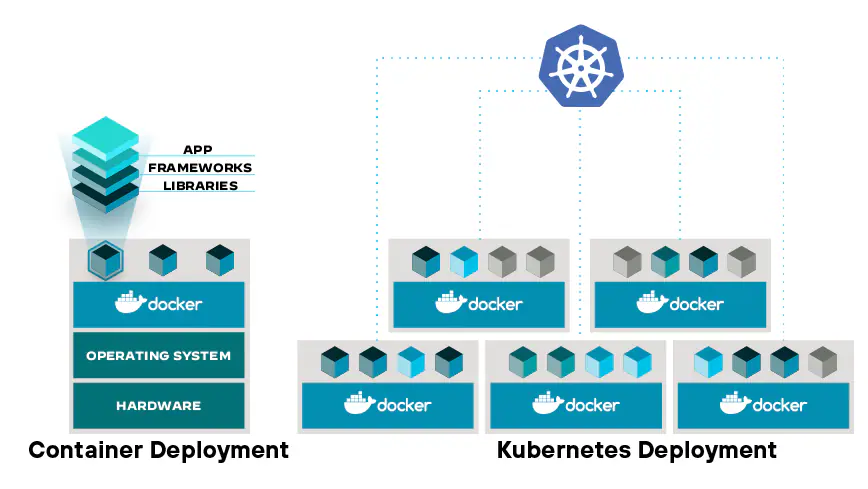

Kubeflow is the Kubernetes-native ML platform that originated at Google and is now developed as an open source community project. If your infrastructure already runs on Kubernetes, and your team has the cluster management expertise to go with it, Kubeflow provides powerful pipeline orchestration, distributed training, and hyperparameter tuning. Kubeflow Pipelines compiles Python functions into reproducible, containerized pipeline DAGs. The barrier to entry is steep. Teams without strong Kubernetes experience will spend more time debugging infrastructure than running experiments.

Best for: Enterprise ML teams with dedicated MLOps engineers and existing Kubernetes infrastructure at scale.

💰 Pricing: Free (open source) | Managed offerings have separate pricing

DVC is the open source answer to “how do we version large datasets and models without breaking Git?” It treats data and model artifacts like Git treats code — with commits, branches, and remote storage backends (S3, GCS, Azure, SSH). The pipeline definition syntax creates reproducible, cache-aware ML pipelines that only rerun when upstream data or code changes. DVC doesn’t try to be a full MLOps platform — it focuses on doing data versioning and pipeline reproducibility extremely well.
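The cache-aware behavior described above can be illustrated with a small sketch. This is not DVC's implementation or API. It is a toy, standard-library model of the idea, and `fingerprint`, `StageCache`, and `count_rows` are hypothetical names introduced only for this example. A stage reruns only when the fingerprint of its inputs (data plus code) changes.

```python
import hashlib
import json


def fingerprint(*parts: bytes) -> str:
    """Combine a stage's inputs (data and code) into one content hash."""
    digest = hashlib.sha256()
    for part in parts:
        # Hash each part on its own so (b"ab", b"c") and (b"a", b"bc") differ.
        digest.update(hashlib.sha256(part).digest())
    return digest.hexdigest()


class StageCache:
    """Toy cache: maps an input fingerprint to a previously computed output."""

    def __init__(self) -> None:
        self._outputs: dict[str, str] = {}
        self.runs = 0  # how many times a stage actually executed

    def run(self, data: bytes, code: bytes, stage) -> str:
        key = fingerprint(data, code)
        if key not in self._outputs:
            # Inputs changed (or were never seen), so the stage must run.
            self.runs += 1
            self._outputs[key] = stage(data)
        return self._outputs[key]


def count_rows(data: bytes) -> str:
    """Hypothetical pipeline stage: summarize a CSV-like payload."""
    return json.dumps({"rows": len(data.splitlines())})


CODE_V1 = b"count_rows v1"  # stand-in for the stage's source code
cache = StageCache()

first = cache.run(b"a,1\nb,2\n", CODE_V1, count_rows)
second = cache.run(b"a,1\nb,2\n", CODE_V1, count_rows)      # unchanged: cache hit
third = cache.run(b"a,1\nb,2\nc,3\n", CODE_V1, count_rows)  # data changed: rerun
```

Three calls result in two real executions, because the second call reuses the cached output. A real tool stores fingerprints and outputs on disk or in remote storage rather than in memory.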

Best for: Any team working with large datasets or needing reproducible data pipelines. Use alongside an experiment tracker, not instead of one.

💰 Pricing: Free (open source)

ZenML takes a framework-first approach to MLOps: you write standard Python, decorate functions with @step and @pipeline, and ZenML handles the orchestration layer, artifact storage, and integration with whichever backend you prefer (Airflow, Kubeflow, Vertex AI, or ZenML’s own server). It’s infrastructure-agnostic by design, which makes it unusually portable. ZenML shines for teams that need pipeline portability: the ability to run the same pipeline locally and in production without rewriting logic.

Best for: Teams that want infrastructure-agnostic ML pipelines and don’t want to be locked into a single orchestrator.

💰 Pricing: Free tier | Pro: Custom | Enterprise: Custom

Metaflow was open-sourced by Netflix and has matured into a serious option for data-scientist-friendly ML infrastructure. Its core insight is that data scientists shouldn’t have to learn DevOps to run reproducible, scalable workflows. Metaflow handles versioning of data artifacts, reproducible execution, and scaling to cloud compute transparently. The step decorator pattern feels natural in Python notebooks and scripts. The AWS integration (Batch, Step Functions) is especially tight.

Best for: Data science-heavy teams on AWS who want reproducible, scalable workflows without heavy Kubernetes expertise.

💰 Pricing: Free (open source)

Apache Airflow is the workhorse of workflow orchestration. It predates the modern MLOps era but remains enormously relevant because virtually every data engineering team already runs it — and ML pipelines often need to interoperate with data pipelines. Its DAG-based workflow model, massive operator ecosystem, and managed offerings make it a pragmatic choice. Airflow wasn’t designed for ML specifically, and it shows: artifact versioning, experiment tracking, and model serving are outside its scope.
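The DAG-based workflow model can be sketched without Airflow itself. The example below uses Python's standard-library `graphlib` to derive a valid execution order from task dependencies. It does not use Airflow's API, and the task names are hypothetical. It only shows the idea that data tasks and ML tasks can live in a single dependency graph.

```python
from graphlib import TopologicalSorter

# Each key maps a task to the set of tasks it depends on (hypothetical names).
dependencies = {
    "extract": set(),                            # data engineering
    "validate": {"extract"},                     # data engineering
    "build_features": {"validate"},              # shared
    "train_model": {"build_features"},           # ML
    "evaluate": {"train_model"},                 # ML
    "publish_report": {"evaluate", "validate"},  # depends on both branches
}

# static_order() yields every task after all of its dependencies.
order = list(TopologicalSorter(dependencies).static_order())


def respects_dependencies(order, dependencies):
    """Check that no task appears before any task it depends on."""
    position = {task: index for index, task in enumerate(order)}
    return all(
        position[upstream] < position[task]
        for task, upstreams in dependencies.items()
        for upstream in upstreams
    )
```

An orchestrator adds scheduling, retries, and operators on top of this ordering. A cycle in the graph would raise `graphlib.CycleError`, which is why these workflows must be acyclic.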

Best for: Teams already running Airflow for data pipelines who need ML steps integrated without adopting a new orchestration stack.

💰 Pricing: Free (open source) | Managed offerings have separate pricing

Neptune AI built a genuinely strong experiment metadata management platform — particularly for teams that needed flexible, queryable experiment metadata beyond what MLflow or W&B offered. The metadata store concept (logging arbitrary structured data to runs) was innovative and influenced how later tools approached logging flexibility.

Best for: Teams actively migrating off Neptune to other platforms.

💰 Pricing: Shutting down — do not start new projects on Neptune.

Full Comparison Table

| Tool | Price | Open Source | Best For |
|---|---|---|---|
| Weights & Biases | $50+/user/month | No | Research teams, best UI |
| MLflow | Free | Yes | Cloud ML platforms |
| ClearML | Free / $15/user/month | Yes | Full stack, data control |
| Comet ML | Custom | No | LLM eval, prod monitoring |
| Kubeflow | Free | Yes | Kubernetes-native teams |
| DVC | Free | Yes | Dataset versioning |
| ZenML | Free / Paid | Yes | Infra-agnostic pipelines |
| Metaflow | Free | Yes | Data scientists on AWS |
| Airflow | Free | Yes | Data + ML pipelines |
| Neptune AI | Shutting down | No | Migrate immediately |

How to Choose: Decision Framework by Team Stage

🌱 Solo / Early Stage

Start with MLflow: free, zero lock-in, runs locally. Add DVC if your datasets are large. Upgrade to ClearML Free when you want pipelines. Avoid W&B until the team grows.

👥 Small Team (2-10)

ClearML Pro at $15/user beats W&B on price and features. Add DVC for data versioning. Use ZenML if you need pipeline portability across infrastructure.
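The price gap is easy to quantify. The sketch below uses only the per-seat figures quoted in this article and assumes monthly billing with no discounts. Because W&B is listed as "$50+", the W&B figure is a lower bound, so real savings may be larger. The function names are introduced for this example.

```python
WANDB_PER_USER_MONTH = 50        # listed as "$50+", so treat as a lower bound
CLEARML_PRO_PER_USER_MONTH = 15  # ClearML Pro price quoted in this article


def annual_cost(users: int, per_user_month: int) -> int:
    """Yearly cost in dollars for a per-seat, per-month price."""
    return users * per_user_month * 12


def annual_savings(users: int) -> int:
    """Minimum yearly difference between W&B Teams and ClearML Pro."""
    return annual_cost(users, WANDB_PER_USER_MONTH) - annual_cost(
        users, CLEARML_PRO_PER_USER_MONTH
    )


# Savings at the edges and middle of the 2-10 person range.
savings_by_team_size = {users: annual_savings(users) for users in (2, 5, 10)}
```

For a 10-person team this comes to at least $4,200 per year ($6,000 versus $1,800), before counting any add-ons or usage-based charges that either vendor may apply.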

🏢 Mid-Size (10-50)

W&B Teams if collaboration and UI matter most to stakeholders. Kubeflow if you have dedicated MLOps engineers and Kubernetes infrastructure. Comet ML if you deploy LLMs and need production monitoring.

🏗️ Enterprise (50+)

Managed Kubeflow-compatible pipelines (Vertex AI Pipelines, or SageMaker with its Kubeflow Pipelines components) for scale. ClearML Enterprise for self-hosted with SSO and SLA. Airflow + ZenML for hybrid data + ML orchestration.
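The framework above can be written as a small lookup, which makes the stage boundaries explicit. The size ranges in this guide overlap at 10 and 50, so the sketch assumes a tie-break: a team of exactly 10 counts as small and a team of exactly 50 counts as mid-size. The function and dictionary names are introduced for this example, and the recommendations are shortened from the text above.

```python
def team_stage(team_size: int) -> str:
    """Map a head count to one of the four stages used in this guide."""
    if team_size < 1:
        raise ValueError("team_size must be at least 1")
    if team_size == 1:
        return "solo"
    if team_size <= 10:  # assumption: exactly 10 counts as small
        return "small"
    if team_size <= 50:  # assumption: exactly 50 counts as mid-size
        return "mid"
    return "enterprise"


# Shortlists condensed from the decision framework in this guide.
RECOMMENDATIONS = {
    "solo": ["MLflow", "DVC (large datasets)", "ClearML Free (pipelines)"],
    "small": ["ClearML Pro", "DVC", "ZenML (pipeline portability)"],
    "mid": ["W&B Teams", "Kubeflow", "Comet ML"],
    "enterprise": ["Managed Kubeflow", "ClearML Enterprise", "Airflow + ZenML"],
}


def recommend(team_size: int) -> list[str]:
    """Return the shortlist for a team of the given size."""
    return RECOMMENDATIONS[team_stage(team_size)]
```

Head count is only a proxy. Existing infrastructure, such as an Airflow or Kubernetes footprint, and compliance requirements may matter more than team size when picking from a shortlist.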