LLMOps for Beginners: A Complete Guide to Deploying & Monitoring LLMs in Production

Everything you need to ship, observe, and optimize large language model applications — from prompt versioning to cost control.

What is LLMOps and Why It Matters in 2026

LLMOps (Large Language Model Operations) is the set of practices, tools, and workflows used to deploy, monitor, and maintain LLM-powered applications in production. Think of it as DevOps — but purpose-built for the era of generative AI.

As of 2026, LLMs have moved from research curiosities to mission-critical infrastructure. Teams are running millions of API calls per day, managing complex RAG pipelines, and serving customers in real time. Without proper operations practices, costs spiral, quality degrades silently, and debugging becomes nearly impossible.

LLMOps is not just about infrastructure. It’s about maintaining quality, reliability, and cost-efficiency as your prompts evolve, your data changes, and your user base grows. In 2026, having LLMOps in place is the difference between an AI product and an AI experiment.

At its core, LLMOps covers: prompt lifecycle management, evaluation and testing, observability and tracing, cost optimization, and safety guardrails. We’ll cover all of these in this guide.

LLMOps vs MLOps: Key Differences

If you have a background in traditional machine learning, you might wonder: why not just use MLOps? The short answer is that LLMs introduce entirely new operational concerns that classic ML pipelines never had to handle.

Many teams apply MLOps tooling to LLM workloads and wonder why it doesn’t work. The fundamental difference: in classical ML, the “intelligence” lives in model weights. In LLMs, a huge portion of behavior is encoded in your prompts, context, and retrieval logic — and those change far more frequently than a trained model.

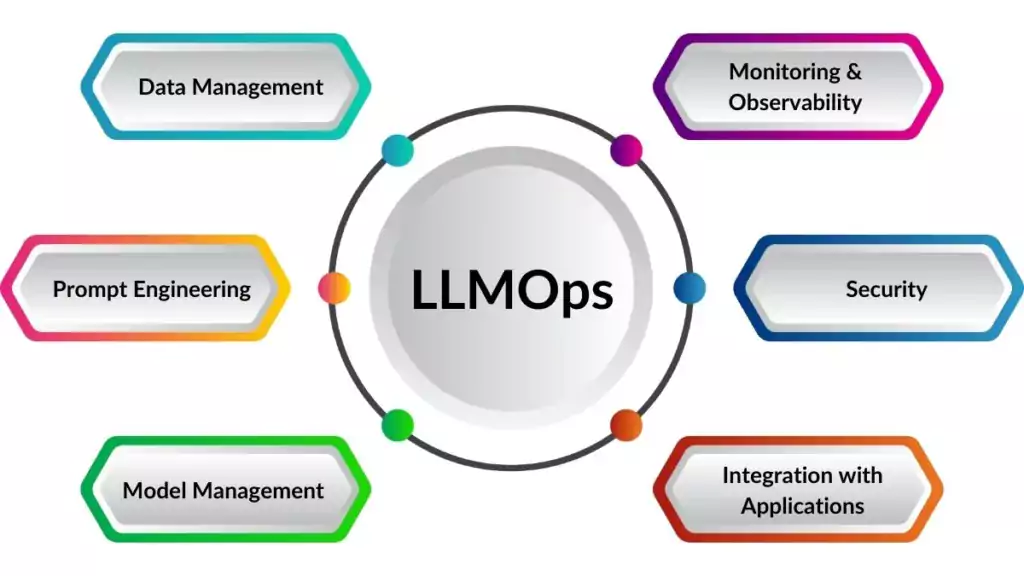

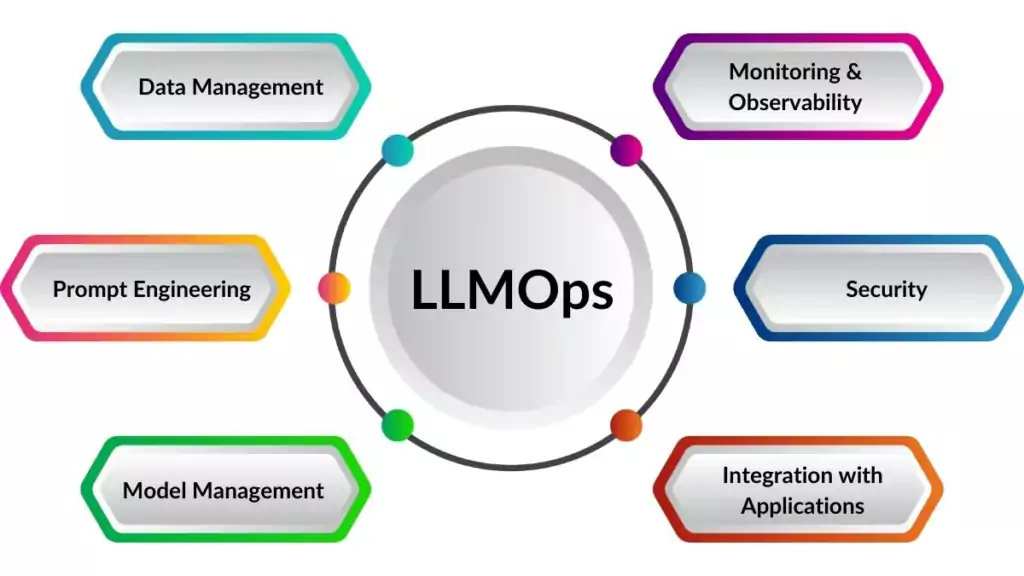

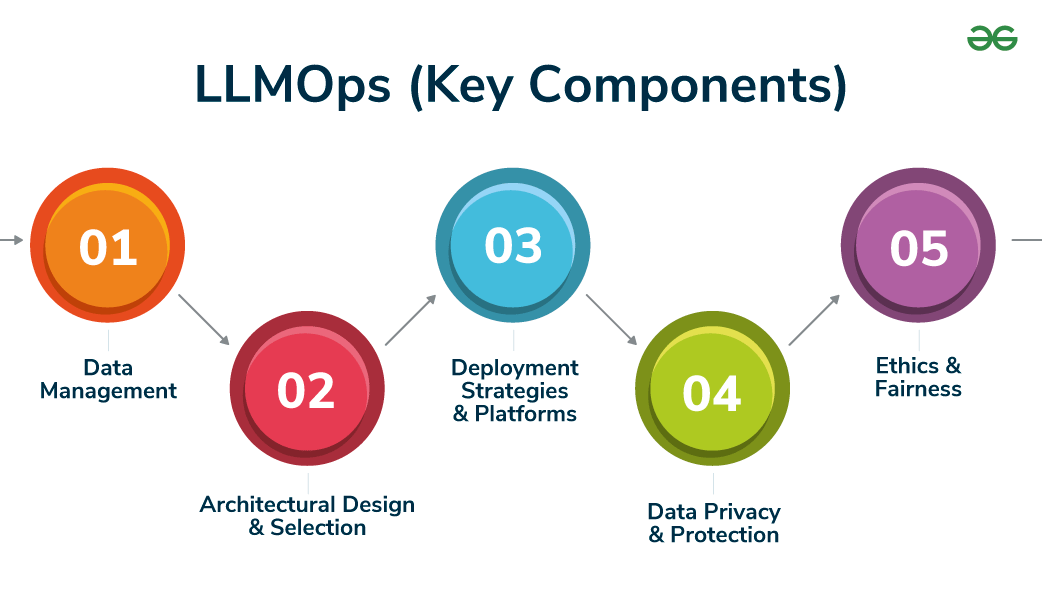

Core Components of an LLMOps Stack

A production-ready LLMOps system in 2026 typically consists of six core pillars. Each addresses a specific failure mode of LLM applications at scale.

Prompt Management

Version-control your prompts like code. Track changes, run A/B tests, and roll back when a new prompt hurts quality.
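To make "version-control your prompts" concrete, here is a minimal sketch of an in-memory prompt registry with publish and rollback. The class and method names (`PromptRegistry`, `publish`, `rollback`, `active`) are hypothetical and chosen for illustration; a real setup would more likely persist versions in Git, a database, or a prompt-management tool.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class PromptVersion:
    version: int
    template: str
    note: str
    created_at: datetime


@dataclass
class PromptRegistry:
    """Hypothetical in-memory store; swap for Git or a database in practice."""
    history: dict[str, list[PromptVersion]] = field(default_factory=dict)
    active_versions: dict[str, int] = field(default_factory=dict)

    def publish(self, name: str, template: str, note: str = "") -> PromptVersion:
        # Every publish appends a new immutable version and makes it active.
        versions = self.history.setdefault(name, [])
        pv = PromptVersion(len(versions) + 1, template, note,
                           datetime.now(timezone.utc))
        versions.append(pv)
        self.active_versions[name] = pv.version
        return pv

    def rollback(self, name: str, version: int) -> PromptVersion:
        # Rolling back only moves the "active" pointer; history is kept.
        versions = self.history[name]
        if not 1 <= version <= len(versions):
            raise ValueError(f"unknown version {version} for prompt {name!r}")
        self.active_versions[name] = version
        return versions[version - 1]

    def active(self, name: str) -> PromptVersion:
        return self.history[name][self.active_versions[name] - 1]
```

Keeping old versions immutable and moving only a pointer means a rollback never destroys the prompt that hurt quality, so you can still inspect it later.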

RAG Evaluation

Measure retrieval quality: Are you fetching the right context? Is the LLM using it faithfully?
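One simple way to answer "are you fetching the right context?" is recall@k against a small hand-labeled set of relevant documents. The sketch below assumes you already have document IDs for both the retrieved and the relevant sets; the function name is illustrative. It does not cover faithfulness, which usually needs an LLM-based judge such as the metrics Ragas provides.

```python
def recall_at_k(retrieved_ids: list[str], relevant_ids: set[str], k: int) -> float:
    """Fraction of the labeled relevant documents found in the top-k results."""
    if not relevant_ids:
        raise ValueError("relevant_ids must not be empty")
    if k <= 0:
        raise ValueError("k must be positive")
    # De-duplicate so a document retrieved twice is only counted once.
    top_k = set(retrieved_ids[:k])
    return len(top_k & relevant_ids) / len(relevant_ids)
```

For example, if the retriever returns `["a", "b", "c"]` and the labeled relevant set is `{"a", "d"}`, then recall@2 is 0.5: one of the two relevant documents made it into the top two.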

LLM Observability

Full-stack tracing of every LLM call, chain step, and tool invocation. See latency, token usage, and outputs in a single timeline view.
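To show what a trace actually records, here is a minimal sketch of nested spans with latency and free-form attributes such as token counts. `Tracer` and `Span` are hypothetical names, not any vendor's API, and this version is single-threaded only; hosted tools add storage, sampling, and a timeline UI on top of the same idea.

```python
import time
from contextlib import contextmanager
from dataclasses import dataclass, field


@dataclass
class Span:
    name: str
    start: float
    end: float = 0.0
    attributes: dict = field(default_factory=dict)
    children: list["Span"] = field(default_factory=list)

    @property
    def latency_ms(self) -> float:
        return (self.end - self.start) * 1000


class Tracer:
    """Hypothetical minimal tracer; not thread-safe."""

    def __init__(self) -> None:
        self.roots: list[Span] = []
        self._stack: list[Span] = []

    @contextmanager
    def span(self, name: str, **attributes):
        s = Span(name, time.perf_counter(), attributes=dict(attributes))
        # Attach to the currently open span, or start a new root trace.
        parent = self._stack[-1] if self._stack else None
        (parent.children if parent else self.roots).append(s)
        self._stack.append(s)
        try:
            yield s
        finally:
            s.end = time.perf_counter()
            self._stack.pop()


tracer = Tracer()
with tracer.span("rag_request"):
    with tracer.span("retrieve", top_k=4):
        pass  # the vector search would run here
    with tracer.span("llm_call", model="example-model") as llm_span:
        llm_span.attributes["total_tokens"] = 512  # taken from the API response
```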

Guardrails

Automated safety checks on inputs and outputs: block jailbreaks, redact PII, detect hallucinations.
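As a rough illustration of input and output checks, the sketch below blocks a few known jailbreak phrases and redacts emails and phone numbers with regular expressions. The patterns and the phrase list are assumptions for the example and are far from exhaustive; production guardrails typically combine rules like these with classifier models, and hallucination detection is not covered here.

```python
import re

# Illustrative patterns only; real PII detection needs broader coverage.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")

# Hypothetical blocklist; attackers rephrase, so treat this as a first filter.
JAILBREAK_PHRASES = (
    "ignore previous instructions",
    "ignore all previous instructions",
    "disregard your system prompt",
)


def is_input_allowed(user_text: str) -> bool:
    """Return False when the input contains a known jailbreak phrase."""
    lowered = user_text.lower()
    return not any(phrase in lowered for phrase in JAILBREAK_PHRASES)


def redact_pii(text: str) -> str:
    """Replace emails and phone-like numbers with placeholders."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    return PHONE_RE.sub("[PHONE]", text)
```

Running `redact_pii("Mail jane@example.com or call +1 415-555-0100.")` yields `"Mail [EMAIL] or call [PHONE]."`.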

Cost Tracking

Per-request token accounting. Attribute costs to users, features, or tenants. Set budget alerts.
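Per-request accounting comes down to multiplying token counts by a price and adding the result to the right bucket. The sketch below assumes made-up model names and prices (USD per million tokens) and a hypothetical `CostLedger` class; plug in your provider's actual price sheet.

```python
from collections import defaultdict
from dataclasses import dataclass

# Hypothetical prices: (input, output) in USD per 1M tokens.
PRICES = {
    "small-model": (0.15, 0.60),
    "large-model": (2.50, 10.00),
}


@dataclass(frozen=True)
class Usage:
    tenant: str
    feature: str
    model: str
    input_tokens: int
    output_tokens: int


def request_cost(u: Usage) -> float:
    in_price, out_price = PRICES[u.model]
    return (u.input_tokens * in_price + u.output_tokens * out_price) / 1_000_000


class CostLedger:
    def __init__(self, budget_usd: float) -> None:
        self.budget_usd = budget_usd
        self.by_tenant: dict[str, float] = defaultdict(float)
        self.by_feature: dict[str, float] = defaultdict(float)

    def record(self, u: Usage) -> float:
        # Attribute the same cost to both the tenant and the feature.
        cost = request_cost(u)
        self.by_tenant[u.tenant] += cost
        self.by_feature[u.feature] += cost
        return cost

    def over_budget(self) -> list[str]:
        """Tenants whose accumulated spend exceeds the budget."""
        return sorted(t for t, c in self.by_tenant.items() if c > self.budget_usd)
```

In practice `over_budget()` would feed an alerting channel; here it simply returns the tenant names so the logic stays easy to test.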

Feedback Loops

Collect human ratings and implicit signals and feed them back into prompt improvement cycles.


Essential Tools Overview

The LLMOps tooling ecosystem has matured rapidly. Here are the leading platforms you’ll encounter in 2026, and what each one is best at.

LangSmith: Built by the LangChain team. Best-in-class tracing for LangChain and LangGraph apps.

Ragas: Open-source framework for evaluating RAG pipelines. Measures faithfulness and answer relevancy.

Helicone: Drop-in proxy for any OpenAI/Anthropic call. Logs every request and tracks token costs.

End-to-end LLM evaluation platform with experiment tracking and scoring functions.

Starting out? Use Helicone for instant cost visibility (5 min setup), LangSmith for tracing your chains, and Ragas to evaluate your RAG retrieval. That covers 80% of your LLMOps needs with minimal overhead.

Step-by-Step: Build a RAG Pipeline with Tracing

Let’s build a minimal but production-instrumented RAG pipeline. We’ll use LangChain for orchestration, Chroma as our vector store, and LangSmith for tracing every step of the pipeline.

Set Up Your Environment

Install dependencies and configure your API keys. We’ll need LangChain, an OpenAI key, and a LangSmith account (free tier available).
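A small startup check can catch missing keys before the first request fails. The sketch below is an assumption-laden example: the package list in the comment and the environment variable names (`LANGCHAIN_TRACING_V2`, `LANGCHAIN_API_KEY`, `LANGCHAIN_PROJECT`) reflect commonly documented LangSmith settings, but names have changed across releases, so confirm them against the current docs. The helper functions themselves are hypothetical.

```python
import os

# Assumed install line (verify package names for your versions):
#   pip install langchain langchain-openai langchain-chroma langsmith ragas

REQUIRED_KEYS = ("OPENAI_API_KEY", "LANGCHAIN_API_KEY")


def missing_settings(env=os.environ) -> list[str]:
    """Return the required keys that are unset or empty."""
    return [key for key in REQUIRED_KEYS if not env.get(key)]


def configure_tracing(env, project: str) -> None:
    """Switch tracing on and group runs under one project name."""
    env["LANGCHAIN_TRACING_V2"] = "true"
    env["LANGCHAIN_PROJECT"] = project
```

Calling `missing_settings()` at startup and exiting with a clear message if the list is non-empty is usually friendlier than a stack trace from deep inside a chain.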

Initialize the Vector Store

Load your documents, chunk them into pieces, embed them, and store in Chroma. Every step will be traced automatically by LangSmith.
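The chunking step is worth seeing in isolation, because chunk size and overlap directly affect retrieval quality. Below is a minimal character-based sketch with a hypothetical `chunk_text` helper; it is not LangChain's splitter, which also tries to break on separators such as paragraphs and sentences.

```python
def chunk_text(text: str, chunk_size: int = 500, overlap: int = 50) -> list[str]:
    """Split text into fixed-size chunks that share `overlap` characters."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be in [0, chunk_size)")
    step = chunk_size - overlap
    chunks: list[str] = []
    start = 0
    while start < len(text):
        chunks.append(text[start:start + chunk_size])
        # Stop once this chunk reaches the end, so no tail chunk is
        # emitted that is already fully contained in the previous one.
        if start + chunk_size >= len(text):
            break
        start += step
    return chunks
```

With `chunk_text("abcdefghij", chunk_size=4, overlap=2)` the result is `["abcd", "cdef", "efgh", "ghij"]`: each chunk repeats the last two characters of the one before it.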

Build the RAG Chain

Wire together retriever → prompt → LLM → output parser into a LangChain LCEL chain. LangSmith will capture the full run tree.
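To make the data flow explicit, here is a library-free sketch of the same four stages composed left to right. Everything in it is a stand-in: `retrieve` uses keyword overlap instead of a vector search, `fake_llm` does not call a model, and `pipe` only mimics how LCEL chains steps with the `|` operator. It is meant to show the shape of the pipeline, not LangChain's API.

```python
from functools import reduce
from typing import Any, Callable

DOCS = ["Chroma is a vector store.", "LangSmith records traces."]


def retrieve(question: str) -> dict:
    # Stand-in retriever: keep documents sharing at least one word.
    words = set(question.lower().split())
    hits = [d for d in DOCS if words & set(d.lower().split())]
    return {"question": question, "context": hits}


def build_prompt(inputs: dict) -> str:
    context = "\n".join(inputs["context"])
    return (f"Answer using only this context:\n{context}\n\n"
            f"Question: {inputs['question']}")


def fake_llm(prompt: str) -> dict:
    # Stand-in for the model call; returns a message-like dict.
    return {"content": f"  (model output for a {len(prompt)}-char prompt)  "}


def parse_output(message: dict) -> str:
    return message["content"].strip()


def pipe(*steps: Callable[[Any], Any]) -> Callable[[Any], Any]:
    """Compose steps so each one receives the previous step's output."""
    return lambda value: reduce(lambda acc, step: step(acc), steps, value)


rag_chain = pipe(retrieve, build_prompt, fake_llm, parse_output)
```

Because each stage is a plain function with one input and one output, you can test or trace any stage on its own, which is the same property that makes a run tree readable.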

Add Ragas Evaluation

After each run, evaluate faithfulness and answer relevancy. Store results to track quality over time as your prompts evolve.
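Storing scores per prompt version is what lets you spot a regression. The sketch below assumes the faithfulness and answer relevancy scores (each between 0 and 1) have already been computed elsewhere, for example by Ragas; the `EvalLog` class and the 0.05 tolerance are hypothetical choices for illustration.

```python
from collections import defaultdict
from statistics import mean

METRICS = ("faithfulness", "answer_relevancy")


class EvalLog:
    """Hypothetical in-memory log of evaluation scores per prompt version."""

    def __init__(self) -> None:
        self._rows: dict[str, list[dict]] = defaultdict(list)

    def record(self, prompt_version: str, faithfulness: float,
               answer_relevancy: float) -> None:
        self._rows[prompt_version].append(
            {"faithfulness": faithfulness, "answer_relevancy": answer_relevancy}
        )

    def summary(self, prompt_version: str) -> dict[str, float]:
        rows = self._rows.get(prompt_version)
        if not rows:
            raise ValueError(f"no scores recorded for {prompt_version!r}")
        return {m: mean(r[m] for r in rows) for m in METRICS}

    def regressed(self, baseline: str, candidate: str,
                  tolerance: float = 0.05) -> list[str]:
        """Metrics where the candidate's mean dropped by more than tolerance."""
        base, cand = self.summary(baseline), self.summary(candidate)
        return [m for m in METRICS if cand[m] < base[m] - tolerance]
```

A non-empty result from `regressed("v1", "v2")` is a reasonable signal to hold a prompt change back until someone has looked at the failing examples.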

Deploy & Monitor

Ship to production. Set up LangSmith alerts for latency spikes and quality drops. Route feedback from users back into your eval dataset.
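If you want to reason about what a latency alert is checking, a rolling p95 over recent requests is a common choice. The sketch below is independent of any vendor's alerting feature; the `LatencyMonitor` class, the window of 100 requests, and the 2,000 ms threshold are assumptions for the example.

```python
from collections import deque
from statistics import quantiles


class LatencyMonitor:
    """Hypothetical rolling-window p95 check over recent request latencies."""

    def __init__(self, window: int = 100, p95_threshold_ms: float = 2000.0,
                 min_samples: int = 20) -> None:
        self.samples: deque[float] = deque(maxlen=window)
        self.p95_threshold_ms = p95_threshold_ms
        self.min_samples = min_samples

    def observe(self, latency_ms: float) -> None:
        # The deque drops the oldest sample once the window is full.
        self.samples.append(latency_ms)

    def p95(self) -> float:
        if len(self.samples) < 2:
            raise ValueError("need at least two samples")
        # n=20 yields 19 cut points; the last one is the 95th percentile.
        return quantiles(self.samples, n=20)[-1]

    def should_alert(self) -> bool:
        # Avoid alerting on a handful of requests right after deploy.
        return (len(self.samples) >= self.min_samples
                and self.p95() > self.p95_threshold_ms)
```

Using p95 rather than the mean keeps a few slow requests from being averaged away while still ignoring a single outlier.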

Cost Management: Track, Optimize, Control

Token costs are the silent killer of LLM startups. A feature that costs $10/day in dev (roughly $300/month) can cost $3,000/month in production. Here’s a systematic approach to keeping your bill in check.

| Strategy | Description | Estimated savings |
|---|---|---|
| Prompt compression | Reduce system prompt length. Remove redundant instructions. | 15–40% |
| Model routing | Route simple queries to smaller/cheaper models. | 50–80% |
| Semantic caching | Cache LLM responses for similar queries. | 20–60% |
| Context window tuning | Reduce retrieved chunks, trim conversation history. | 20–35% |
| Batch API | Use async batch endpoints for non-real-time tasks. | 50% |
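To illustrate the caching row, here is a deliberately simplified sketch of a similarity cache. Real semantic caches compare embedding vectors; this version swaps in Jaccard similarity over word sets so it runs with the standard library alone. The `SimilarityCache` class and the 0.8 threshold are assumptions for the example.

```python
import re
from typing import Optional


def _tokens(text: str) -> set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def jaccard(a: str, b: str) -> float:
    """Word-set overlap in [0, 1]; a stand-in for embedding similarity."""
    ta, tb = _tokens(a), _tokens(b)
    if not ta and not tb:
        return 1.0
    return len(ta & tb) / len(ta | tb)


class SimilarityCache:
    def __init__(self, threshold: float = 0.8) -> None:
        self.threshold = threshold
        self.entries: list[tuple[str, str]] = []  # (query, response)

    def put(self, query: str, response: str) -> None:
        self.entries.append((query, response))

    def get(self, query: str) -> Optional[str]:
        # Linear scan is fine for a sketch; use a vector index at scale.
        best = max(self.entries, key=lambda e: jaccard(query, e[0]), default=None)
        if best is not None and jaccard(query, best[0]) >= self.threshold:
            return best[1]
        return None
```

The threshold is the main knob: set it too low and users get answers to questions they did not ask, too high and the cache rarely hits.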

Classify incoming queries by complexity. Route simple factual questions (70% of traffic) to a cheap small model. Route reasoning tasks (25%) to a mid-tier model. Reserve your flagship model for the genuinely hard cases (5%). This alone can cut your monthly bill by 60–70%, with little or no quality loss as long as the classifier routes accurately.
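The routing idea and its cost arithmetic can be sketched in a few lines. The keyword heuristic below is a placeholder for a real complexity classifier, and the tier names and per-request costs are made up for illustration.

```python
# Hypothetical keyword hints; a trained classifier would replace these.
HARD_HINTS = ("prove", "design")
REASONING_HINTS = ("why", "compare", "explain", "step by step", "trade-off")

ROUTES = {"simple": "small-model", "reasoning": "mid-model", "hard": "flagship-model"}


def classify(query: str) -> str:
    q = query.lower()
    if len(q.split()) > 60 or any(h in q for h in HARD_HINTS):
        return "hard"
    if any(h in q for h in REASONING_HINTS):
        return "reasoning"
    return "simple"


def route(query: str) -> str:
    return ROUTES[classify(query)]


def blended_cost(mix: dict[str, float], cost_per_request: dict[str, float]) -> float:
    """Average cost per request given the traffic share of each tier."""
    return sum(share * cost_per_request[tier] for tier, share in mix.items())


# Traffic split from the text; the costs (USD per request) are hypothetical.
MIX = {"simple": 0.70, "reasoning": 0.25, "hard": 0.05}
COSTS = {"simple": 0.002, "reasoning": 0.006, "hard": 0.010}
routed = blended_cost(MIX, COSTS)
savings = 1 - routed / COSTS["hard"]  # versus sending everything to the flagship
```

Under these assumed prices the blended cost is about $0.0034 per request against $0.010 for flagship-only traffic, roughly 66% lower. Your own numbers depend entirely on real prices and on how often the classifier sends a hard query to a model that is too small.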