Model Drift Detection:

Stop Silent Failures Before They Kill Your Model

Your model shipped. Now it’s slowly dying — and you don’t know it. Learn to detect data drift, concept drift, and prediction drift using Evidently AI, FastAPI, and Python before the damage is done.

FastAPI

Python 3.12+

PSI · KS Test

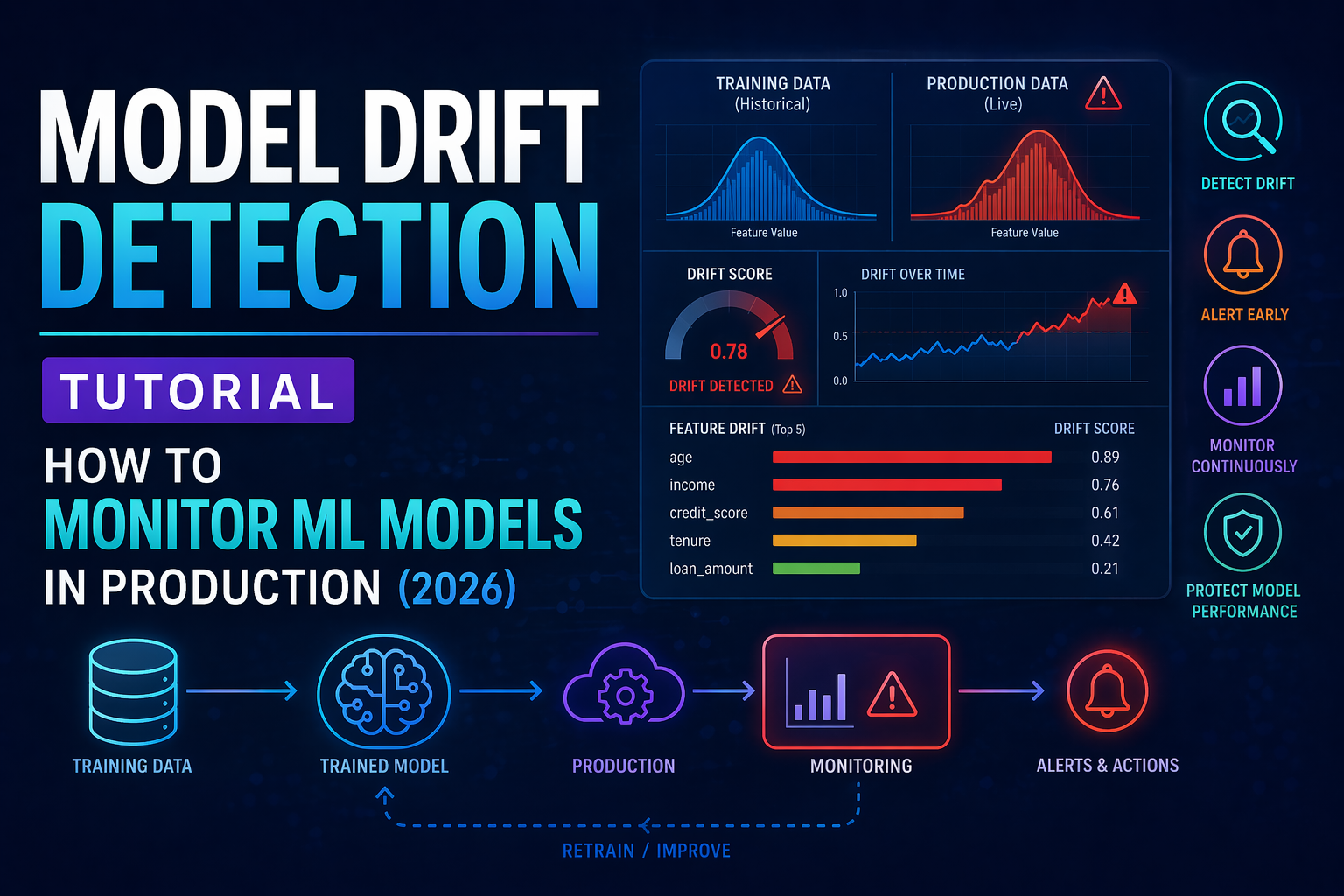

Model drift detection is the practice of monitoring machine learning models in production to identify when they start degrading due to changes in real-world data. Without proper detection, the model that worked perfectly at deployment starts making worse predictions — often silently, without any errors or alerts.

This is one of the most common reasons ML projects fail in production. By the time you notice the problem, you may already have lost revenue, damaged user trust, or made critical bad decisions. Drift detection is how you catch these issues early, before they show up in business metrics.

The Silent Killer: Most teams don’t monitor drift. They only notice when a stakeholder complains. By then, the model has been wrong for weeks, sometimes months. Drift monitoring could have flagged the shift much earlier.

Three Types of Drift You Must Monitor

Real-World Examples

- Data Drift: A fraud detection model trained on 2024 transaction patterns encounters completely different spending behavior in 2026. Accuracy silently collapses.

- Concept Drift: A house price model trained pre-COVID fails badly after remote work permanently changes housing demand dynamics.

- Prediction Drift: A recommendation model starts surfacing entirely different categories as user behavior shifts after a product change.

The hard truth: If you’re not implementing drift detection, you’re flying blind. Your model is degrading right now — you just don’t know it.

Why Model Drift Detection Matters

Bad recommendations, wrong pricing, and failed fraud detection all translate directly to lost money. Users often notice when your model is wrong before you do. Regulated industries such as finance and healthcare often require ongoing model monitoring. Without drift detection, you waste days debugging “why did the model get worse?” with zero data to guide you.

Business case: One drift detection system can save months of engineering time and prevent millions in revenue loss. The ROI is not even close.

Statistical Methods for Drift Detection

PSI

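Population Stability Index: measures how far a feature’s distribution in current data has shifted from a reference sample, by comparing the share of values that fall in each bin.

Common rule of thumb: PSI < 0.1 → stable · 0.1 to 0.25 → moderate shift, watch closely · PSI > 0.25 → significant shift, investigate and consider retraining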
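To make the PSI number concrete, here is a minimal sketch in plain Python. It assumes quantile bins taken from the reference sample and a small `eps` floor for empty bins. Both are common choices rather than anything this tutorial prescribes, and the function name `psi` and the synthetic samples are illustrative only.

```python
import math
import random


def psi(reference, current, n_bins=10, eps=1e-6):
    """Population Stability Index between two numeric samples.

    Bin edges are quantiles of the reference sample (an assumption of this
    sketch), so each bin holds roughly an equal share of the reference data.
    """
    ref_sorted = sorted(reference)
    # Interior cut points at the 1/n_bins, 2/n_bins, ... quantiles.
    cuts = [ref_sorted[int(len(ref_sorted) * i / n_bins)] for i in range(1, n_bins)]

    def bin_shares(sample):
        counts = [0] * n_bins
        for x in sample:
            # Bin index = number of cut points that x has reached or passed.
            counts[sum(1 for c in cuts if x >= c)] += 1
        # eps keeps empty bins from producing log(0) or division by zero.
        return [max(c / len(sample), eps) for c in counts]

    ref_p = bin_shares(reference)
    cur_p = bin_shares(current)
    # PSI = sum over bins of (current - reference) * ln(current / reference)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref_p, cur_p))


rng = random.Random(0)
train = [rng.gauss(0.0, 1.0) for _ in range(5000)]    # reference sample
same = [rng.gauss(0.0, 1.0) for _ in range(5000)]     # no drift
shifted = [rng.gauss(1.0, 1.0) for _ in range(5000)]  # mean moved by one std dev

psi_same = psi(train, same)        # close to zero
psi_shifted = psi(train, shifted)  # well above the 0.25 threshold
```

PSI depends on how you bin, so keep the bin edges fixed (derived from the reference data) when comparing scores over time.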

KS Test

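Two-sample Kolmogorov-Smirnov test. Compares the empirical CDFs of a reference sample and a current sample. A p-value below your chosen significance level (0.05 is a common choice) signals drift. On very large samples even tiny shifts become statistically significant, so check the size of the KS statistic as well.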
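As an illustration of what the KS test computes, the sketch below implements the two-sample statistic and an approximate p-value using only the standard library. The function name `ks_two_sample` is hypothetical, and the p-value uses the asymptotic Kolmogorov series, so treat it as approximate. In practice most teams call `scipy.stats.ks_2samp` instead of writing this by hand.

```python
import math
import random


def ks_two_sample(a, b):
    """Return (D, p_value) for the two-sample Kolmogorov-Smirnov test.

    D is the largest gap between the two empirical CDFs. The p-value comes
    from the asymptotic Kolmogorov distribution and is only approximate.
    """
    a, b = sorted(a), sorted(b)
    n, m = len(a), len(b)
    i = j = 0
    d = 0.0
    # Walk both sorted samples together, tracking each empirical CDF.
    while i < n and j < m:
        x = min(a[i], b[j])
        while i < n and a[i] <= x:
            i += 1
        while j < m and b[j] <= x:
            j += 1
        d = max(d, abs(i / n - j / m))

    ne = n * m / (n + m)  # effective sample size
    lam = (math.sqrt(ne) + 0.12 + 0.11 / math.sqrt(ne)) * d
    if lam < 0.2:
        # The series below converges poorly here; the true value is ~1.
        return d, 1.0
    # Q(lam) = 2 * sum_{k>=1} (-1)^(k-1) * exp(-2 * k^2 * lam^2)
    p = 2.0 * sum((-1) ** (k - 1) * math.exp(-2.0 * k * k * lam * lam)
                  for k in range(1, 101))
    return d, min(max(p, 0.0), 1.0)


rng = random.Random(42)
reference = [rng.gauss(0.0, 1.0) for _ in range(2000)]
no_drift = [rng.gauss(0.0, 1.0) for _ in range(2000)]
drifted = [rng.gauss(1.0, 1.0) for _ in range(2000)]

d_same, p_same = ks_two_sample(reference, no_drift)
d_drift, p_drift = ks_two_sample(reference, drifted)  # large D, tiny p-value
```

Reporting `D` next to the p-value helps separate a shift that is statistically detectable from one that is large enough to matter.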

Distribution Plots

Plot feature distributions over time. Look for shifts.

Quick rule of thumb: If any feature’s distribution changes by more than 15–20%, investigate immediately. Don’t wait for accuracy to drop. That’s the power of drift detection.

Tools for Model Drift Detection

Open Source

Python library that generates drift reports, data quality dashboards, and model performance metrics. Free, self-hosted.

SaaS

Managed platform with a free tier. Monitors drift, data quality, and performance out of the box.

Infrastructure

Self-hosted monitoring stack. Track drift metrics as time-series. Alert when drift scores cross thresholds.

Open Source

Track drift scores as metrics alongside experiments. Trigger external alerts when scores exceed thresholds.

Step-by-Step Tutorial

Pro tip on frequency: Run drift detection daily for revenue-critical models, weekly for others. The cost of a single missed drift event vastly outweighs the cost of running checks regularly.

When Drift Is Detected — What To Do

Open the Evidently report and look at which specific features are flagged. Sort by drift score descending.

Work out the cause: is it seasonal, a data pipeline bug, or a real-world behavioral shift? Drift detection tells you what changed, not why.

If drift is real and significant, retrain on newer labeled data. Don’t retrain blindly — confirm you have sufficient new data first.

Update your alert thresholds based on what you learned. Some drift may be acceptable for your use case.

Record the incident in your model’s changelog. Include what drifted, why, and how you fixed it.

Retraining strategy: Don’t retrain reflexively. Only retrain when drift is confirmed AND you have sufficient new labeled data. Retraining on insufficient data can make things worse.
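The triage steps and the retraining rule above can be sketched as a small decision helper. Everything here is illustrative: the `DriftCheck` class, the feature names, the scores, and the `min_labeled_rows` cutoff are hypothetical, and the right values depend on your model and data volume.

```python
from dataclasses import dataclass


@dataclass
class DriftCheck:
    feature: str
    score: float      # drift score for this feature, e.g. PSI
    threshold: float  # alert threshold chosen for this feature


def drifted_features(checks):
    """Features over their threshold, highest drift score first."""
    flagged = [c for c in checks if c.score > c.threshold]
    return sorted(flagged, key=lambda c: c.score, reverse=True)


def should_retrain(checks, cause_confirmed, new_labeled_rows, min_labeled_rows):
    """Retrain only when drift is real AND enough new labeled data exists."""
    return (
        bool(drifted_features(checks))
        and cause_confirmed
        and new_labeled_rows >= min_labeled_rows
    )


# Hypothetical scores from one monitoring run.
checks = [
    DriftCheck("amount", 0.31, 0.25),
    DriftCheck("age", 0.05, 0.25),
    DriftCheck("country", 0.40, 0.25),
]

ranked = [c.feature for c in drifted_features(checks)]  # ["country", "amount"]
retrain_now = should_retrain(checks, cause_confirmed=True,
                             new_labeled_rows=5000, min_labeled_rows=1000)
wait_for_labels = should_retrain(checks, cause_confirmed=True,
                                 new_labeled_rows=200, min_labeled_rows=1000)
```

Keeping `cause_confirmed` as an explicit input forces a human or an upstream check to rule out pipeline bugs and seasonality before a retraining job starts.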


MLOps Engineer · Updated April 2026 · Built with Evidently AI, FastAPI & Python

mlopslab.org · Model Drift Detection Tutorial · 2026 · Evidently AI · FastAPI · Python