You trained a model. It hits 94% accuracy on your test set. You’re excited. Then someone asks the question that stops every new ML engineer cold:

“How do we actually use it?”

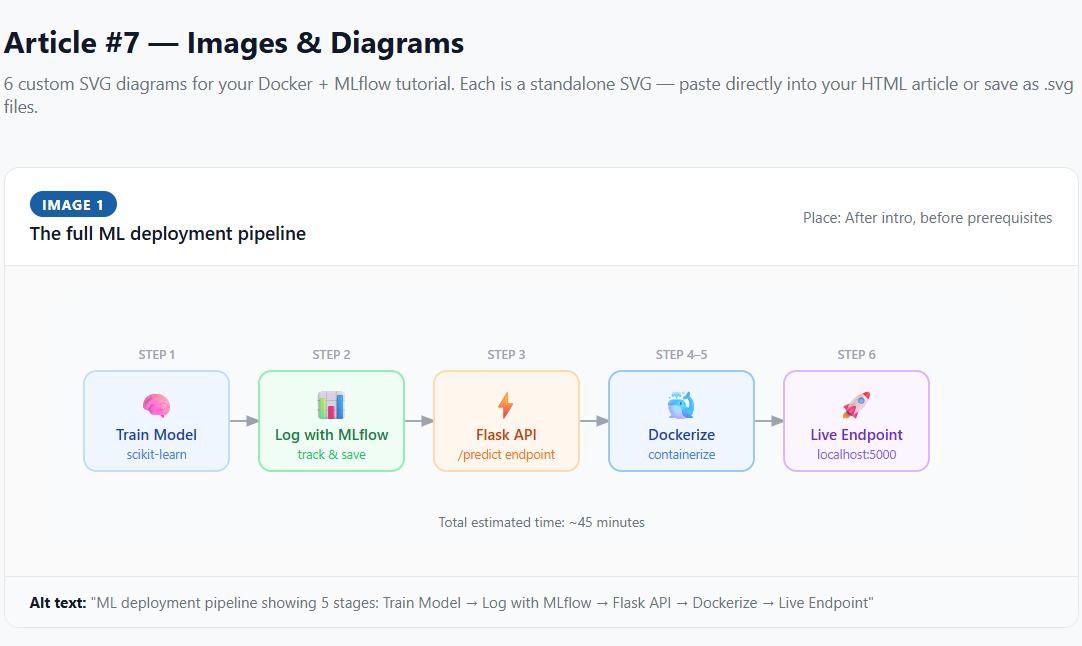

Training a model is step one. Deploying it — making it available to other systems, APIs, or end users — is an entirely different skill. Without a repeatable deployment process, your model lives forever in a Jupyter notebook that only runs on your laptop.

This tutorial fixes that. You’ll use MLflow to track and package your model, Flask to serve predictions over HTTP, and Docker to containerize everything so it runs identically on any machine, any cloud, any environment.

About an hour if you follow along step by step (the per-step estimates below add up to roughly 55 minutes).

Prerequisites

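- 🐍 Python 3.9+: check with `python --version`

- 🐳 Docker Desktop: free at docker.com

- 🧠 Basic ML knowledge: you know what training a model means

- 📦 Python packages: run `pip install mlflow==2.11.0 scikit-learn==1.4.0 flask==3.0.0 numpy==1.26.4` so your local versions match the ones the container pins later (newer MLflow releases store models under a different folder layout, and a model pickled with one scikit-learn version may not load cleanly under another)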

Open Docker Desktop and wait for it to show “Running”. The most common beginner mistake is running Docker commands before the Docker daemon has finished starting up.
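If you'd rather check from a script than watch the Docker Desktop icon, the sketch below runs `docker info`, which exits with a non-zero status when the CLI cannot reach the daemon. The helper name `docker_daemon_running` is hypothetical, our own wrapper rather than part of any library.

```python
import shutil
import subprocess


def docker_daemon_running(timeout: float = 10.0) -> bool:
    """Return True if the docker CLI is installed and can reach the daemon."""
    # No docker binary on PATH: Docker isn't installed (or isn't on PATH).
    if shutil.which("docker") is None:
        return False
    try:
        # `docker info` queries the daemon and exits non-zero if it can't connect.
        result = subprocess.run(
            ["docker", "info"],
            stdout=subprocess.DEVNULL,
            stderr=subprocess.DEVNULL,
            timeout=timeout,
        )
    except (OSError, subprocess.TimeoutExpired):
        return False
    return result.returncode == 0


if __name__ == "__main__":
    print("Docker daemon reachable:", docker_daemon_running())
```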

Project structure

```text
ml-deploy/
├── train.py            # Step 1: train & log model
├── app.py              # Step 3: Flask API
├── Dockerfile          # Step 4: container config
├── requirements.txt    # Step 3: Python dependencies
└── mlruns/             # Auto-created by MLflow
```

Train a model and log it with MLflow

~10 min

Create a file called train.py and paste in this code:

```python
import mlflow
import mlflow.sklearn
from sklearn.datasets import load_iris
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import accuracy_score
from sklearn.model_selection import train_test_split

# Name your experiment; MLflow creates it if it doesn't exist
mlflow.set_experiment("iris-deployment")

with mlflow.start_run() as run:
    # 1. Load and split data
    X, y = load_iris(return_X_y=True)
    X_train, X_test, y_train, y_test = train_test_split(
        X, y, test_size=0.2, random_state=42
    )

    # 2. Train model
    model = RandomForestClassifier(n_estimators=100, random_state=42)
    model.fit(X_train, y_train)

    # 3. Evaluate
    y_pred = model.predict(X_test)
    accuracy = accuracy_score(y_test, y_pred)

    # 4. Log parameters and metrics to MLflow
    mlflow.log_param("n_estimators", 100)
    mlflow.log_param("random_state", 42)
    mlflow.log_metric("accuracy", accuracy)

    # 5. Save model: this is the key step for deployment
    mlflow.sklearn.log_model(model, "model")

    print(f"Model accuracy: {accuracy:.4f}")
    # Both IDs appear in the saved model's path: mlruns/<experiment_id>/<run_id>/
    print(f"Experiment ID: {run.info.experiment_id}")
    print(f"Run ID: {run.info.run_id}")
```

Figure 1: MLflow UI showing experiment tracking dashboard with logged parameters and metrics

Run it with: python train.py

Figure 2: Running the training script — note the Run ID printed at the end

You’ll see output like: “Model accuracy: 0.9667” and a Run ID. Copy that Run ID — you’ll need it in the next step.
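The accuracy figure is simply the share of held-out samples the model labels correctly. The Iris dataset has 150 samples and test_size=0.2 holds out 30 of them, so a reading of 0.9667, for example, means 29 of the 30 test flowers were classified correctly:

$$
\text{accuracy} = \frac{\text{correct predictions}}{\text{test samples}} = \frac{29}{30} \approx 0.9667
$$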

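Run mlflow ui in your project folder and open http://localhost:5000 in your browser. You’ll see every logged parameter, metric, and artifact, including the saved model, in a clean dashboard. Stop the UI with Ctrl+C when you're done: the Flask API and the container in later steps also use port 5000.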
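mlflow ui, the Flask server, and the container all want port 5000, and on recent macOS versions the AirPlay Receiver can occupy it too. A rough way to check whether something already holds the port is to try binding it yourself. The `port_is_free` helper below is a hypothetical sketch using only the standard library.

```python
import socket


def port_is_free(port: int, host: str = "0.0.0.0") -> bool:
    """Return True if nothing is currently bound to host:port."""
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as sock:
        try:
            sock.bind((host, port))
        except OSError:
            # Address already in use (or binding not permitted): treat as busy.
            return False
    # The socket is closed on leaving the `with` block, releasing the port.
    return True


if __name__ == "__main__":
    print("Port 5000 free:", port_is_free(5000))
```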

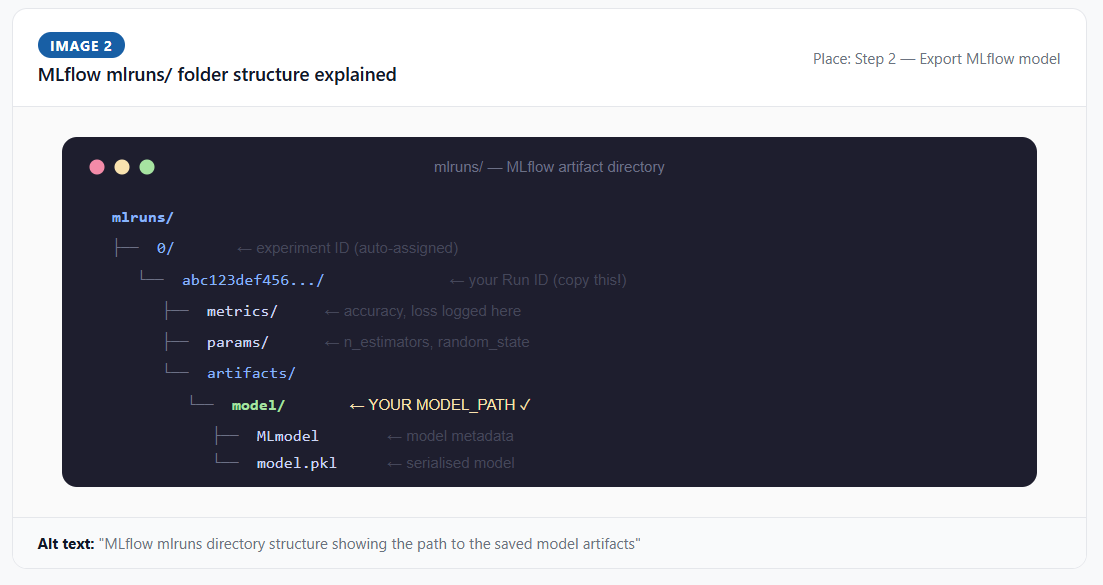

Find your MLflow model path

~5 min

Run this command to print the exact path automatically:

```bash
find mlruns -name "MLmodel" -print
```

Figure 3: The mlruns directory structure — your model is saved in the artifacts/model folder

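The output will look like: mlruns/<experiment_id>/<run_id>/artifacts/model/MLmodel. Because train.py logs to the "iris-deployment" experiment, the experiment ID will not be 0 (that is MLflow's Default experiment); use whatever number the command prints.

Your MODEL_PATH is everything up to and including model, for example: mlruns/<experiment_id>/<run_id>/artifacts/model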

MLflow’s pyfunc loader reads the MLmodel file to learn which flavor (here, scikit-learn) to use when reconstructing your model object. An incorrect path is one of the most common causes of “model not found” errors at deployment time.
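find is a Unix command; on Windows (outside WSL or Git Bash) the built-in find does something different. A cross-platform alternative is to let Python's pathlib search for MLmodel files. The `find_model_dirs` helper below is a hypothetical sketch that returns each directory containing an MLmodel file, which is the value you'd use as MODEL_PATH.

```python
from pathlib import Path
from typing import List


def find_model_dirs(root: str = "mlruns") -> List[str]:
    """Return every directory under `root` that contains an MLmodel file."""
    # rglob walks the tree recursively; each match's parent is a model directory.
    matches = sorted(Path(root).rglob("MLmodel"))
    # as_posix() gives forward slashes, which also suit the Dockerfile COPY line.
    return [p.parent.as_posix() for p in matches]


if __name__ == "__main__":
    for model_dir in find_model_dirs():
        print(model_dir)
```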

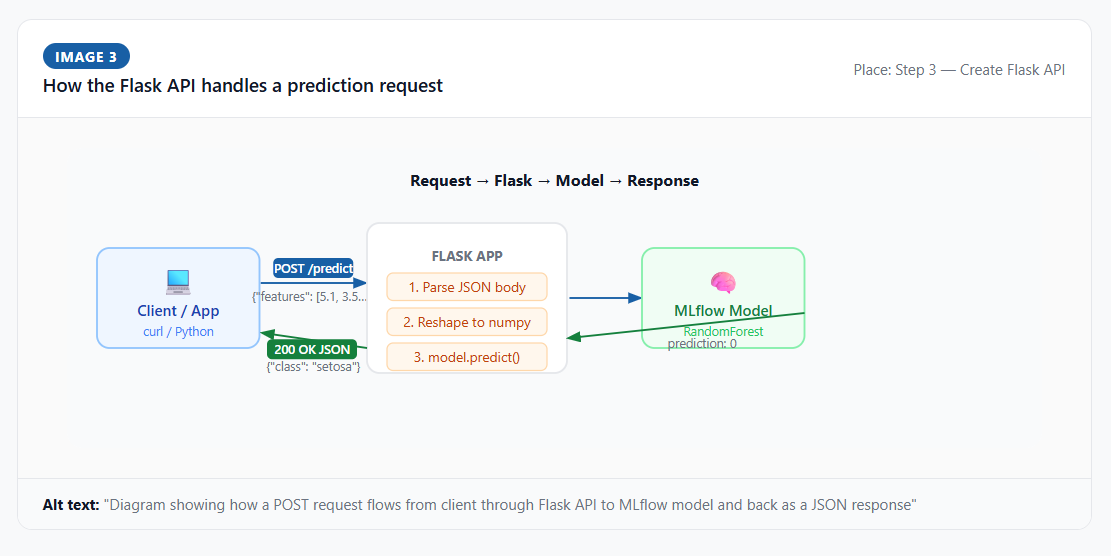

Create a Flask API

~15 min

Now we wrap the model in a web server. Create app.py:

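```python
import os

import numpy as np
from flask import Flask, jsonify, request
import mlflow.pyfunc

app = Flask(__name__)

# Read the model location from the MODEL_PATH environment variable (the
# Dockerfile sets it to ./model). For local runs, REPLACE the fallback below
# with YOUR actual MODEL_PATH from Step 2.
MODEL_PATH = os.environ.get(
    "MODEL_PATH", "mlruns/YOUR_EXPERIMENT_ID/YOUR_RUN_ID/artifacts/model"
)
model = mlflow.pyfunc.load_model(MODEL_PATH)
print(f"Model loaded from: {MODEL_PATH}")

CLASS_NAMES = ["setosa", "versicolor", "virginica"]


@app.route("/health", methods=["GET"])
def health():
    return jsonify({"status": "healthy", "model_loaded": True})


@app.route("/predict", methods=["POST"])
def predict():
    # force=True parses the body even without a JSON Content-Type header;
    # silent=True returns None instead of raising on a missing or invalid body
    data = request.get_json(force=True, silent=True)
    if not isinstance(data, dict) or "features" not in data:
        return jsonify({"error": "Missing 'features' key in request body"}), 400

    try:
        # One sample with four measurements, shaped (1, 4) for the model
        features = np.array(data["features"], dtype=float).reshape(1, -1)
        prediction = model.predict(features)
        pred_int = int(prediction[0])
        return jsonify({
            "prediction": pred_int,
            "class_name": CLASS_NAMES[pred_int],
        })
    except Exception as e:
        return jsonify({"error": str(e)}), 500


if __name__ == "__main__":
    app.run(host="0.0.0.0", port=5000, debug=False)
```

Before containerizing, you can try the API locally with python app.py. If mlflow ui is still running, stop it first, because both use port 5000.

Create requirements.txt: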

```text
flask==3.0.0
mlflow==2.11.0
scikit-learn==1.4.0
numpy==1.26.4
gunicorn==21.2.0
```

Figure 4: Flask API server successfully running on port 5000

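Always pin exact package versions in requirements.txt, and train with those same versions: a model pickled under one scikit-learn version may warn or fail when loaded under another. “It works on my machine” very often comes down to package version drift. Pinning your direct dependencies makes container builds far more reproducible, although packages pulled in indirectly (MLflow alone brings in many) can still change unless you pin them too, for example from the output of pip freeze.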
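To catch a forgotten pin before it reaches a build, you could scan requirements.txt for lines that don't use an exact == specifier. The `unpinned_requirements` function below is a hypothetical and deliberately simple sketch: it strips comments, skips pip options such as -r or -e, and treats wildcard pins like ==1.26.* as unpinned.

```python
from pathlib import Path
from typing import List


def unpinned_requirements(text: str) -> List[str]:
    """Return requirement lines that are not pinned to one exact version."""
    unpinned = []
    for raw in text.splitlines():
        # Drop inline comments and surrounding whitespace.
        line = raw.split("#", 1)[0].strip()
        # Skip blank lines and pip options such as -r, -e or --index-url.
        if not line or line.startswith("-"):
            continue
        # Exact pins use '=='; a '*' wildcard still allows version drift.
        if "==" not in line or "*" in line:
            unpinned.append(line)
    return unpinned


if __name__ == "__main__":
    req = Path("requirements.txt")
    if req.exists():
        print("Unpinned:", unpinned_requirements(req.read_text()) or "none")
```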

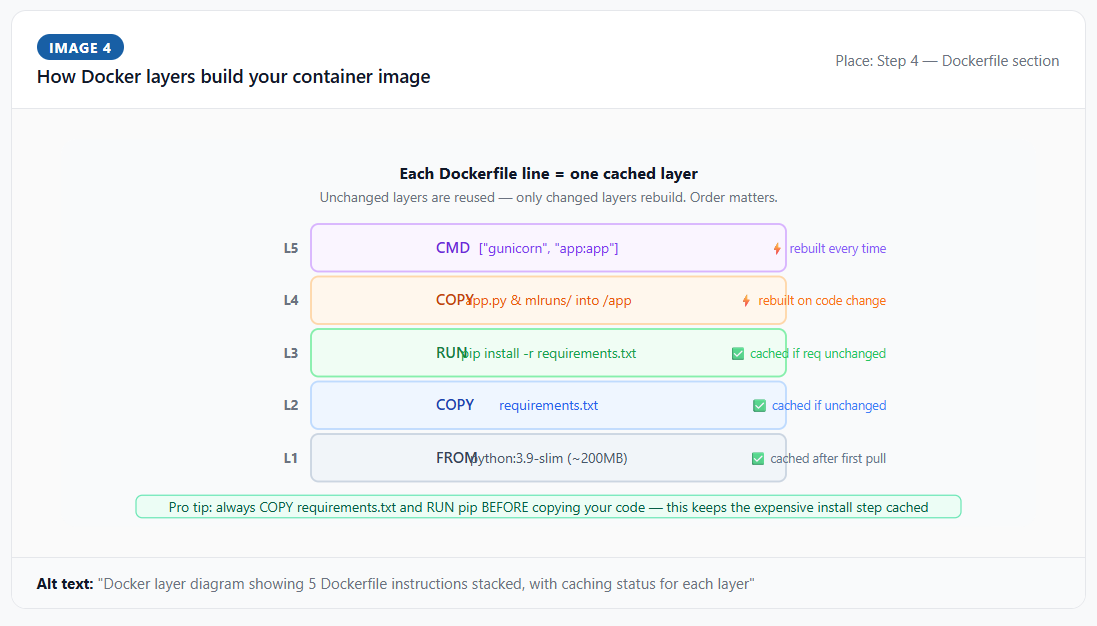
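The /predict handler only checks that a features key exists; other problems with the input, such as three numbers instead of four or a string in the list, surface as a 500. One way to tighten that is to validate the payload before it reaches the model and answer bad input with a 400. The sketch below keeps that logic in a plain function so it can be unit-tested without Flask; `parse_features` and `N_FEATURES` are hypothetical names, and the count of four assumes the Iris model from Step 1.

```python
import math
from typing import Any, List, Tuple

# The Iris model from Step 1 expects exactly four measurements per flower.
N_FEATURES = 4


def parse_features(payload: Any) -> Tuple[List[float], str]:
    """Validate a /predict request body.

    Returns (features, "") on success, or ([], error_message) on failure,
    so the caller can respond with HTTP 400 when the message is non-empty.
    """
    if not isinstance(payload, dict) or "features" not in payload:
        return [], "Missing 'features' key in request body"
    raw = payload["features"]
    if not isinstance(raw, list) or len(raw) != N_FEATURES:
        return [], f"'features' must be a list of {N_FEATURES} numbers"
    features = []
    for value in raw:
        # bool is a subclass of int in Python, so reject it explicitly.
        if isinstance(value, bool) or not isinstance(value, (int, float)):
            return [], "Every feature must be a number"
        # Python's json module accepts NaN and Infinity, so screen them out.
        if not math.isfinite(value):
            return [], "Features must be finite numbers"
        features.append(float(value))
    return features, ""
```

Inside predict(), you would call features, error = parse_features(request.get_json(silent=True)), return jsonify({"error": error}), 400 when error is non-empty, and otherwise pass np.array(features).reshape(1, -1) to the model.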

Write the Dockerfile

~10 min

Create a file named exactly Dockerfile (no extension):

```dockerfile
# Base image: python:3.9-slim gives us Python without the full OS bloat
FROM python:3.9-slim

# Working directory: all subsequent commands run from /app inside the container
WORKDIR /app

# Install dependencies BEFORE copying source code (Docker caches this layer)
COPY requirements.txt .
RUN pip install --no-cache-dir -r requirements.txt

# Copy application code
COPY app.py .

# Copy the trained model (REPLACE the path with YOUR experiment and run IDs)
COPY mlruns/YOUR_EXPERIMENT_ID/YOUR_RUN_ID/artifacts/model ./model

# Document the port the app listens on (docker run -p actually publishes it)
EXPOSE 5000

# Set environment variable for model path
ENV MODEL_PATH=./model

# Start the server using gunicorn (production-grade WSGI server)
CMD ["gunicorn", "--bind", "0.0.0.0:5000", "--workers", "2", "app:app"]
```

Flask’s built-in server is a development server: convenient for local testing, but not designed for the performance, stability, or security demands of production traffic. Gunicorn is a production-grade WSGI server that runs several worker processes (two here) to handle concurrent requests. Use gunicorn (or an ASGI server such as uvicorn for async frameworks) in production containers.
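Gunicorn can also read its settings from a Python file instead of CMD flags; by default it looks for gunicorn.conf.py in the directory it is started from. The sketch below is one possible config, assuming you copy it into /app next to app.py (for example with COPY gunicorn.conf.py . in the Dockerfile). Reading PORT from the environment is an assumption aimed at platforms such as Google Cloud Run, which tell the container which port to listen on through that variable; GUNICORN_WORKERS is a hypothetical variable name of our own.

```python
# gunicorn.conf.py: loaded automatically when gunicorn starts in /app
import os

# Listen on all interfaces; use $PORT if the platform sets it, else 5000.
bind = f"0.0.0.0:{os.environ.get('PORT', '5000')}"

# Each worker process loads its own copy of the model, so memory use grows
# with this number. GUNICORN_WORKERS is a hypothetical variable name.
workers = int(os.environ.get("GUNICORN_WORKERS", "2"))

# Seconds a worker may stay silent (e.g. while booting) before gunicorn
# restarts it; gunicorn's default is 30.
timeout = 60
```

Flags on the command line take precedence over the file, so with this config in place the CMD could shrink to ["gunicorn", "app:app"]. Gunicorn's docs suggest (2 × CPU cores) + 1 workers as a starting point, but for model serving, memory per worker is usually the tighter limit.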

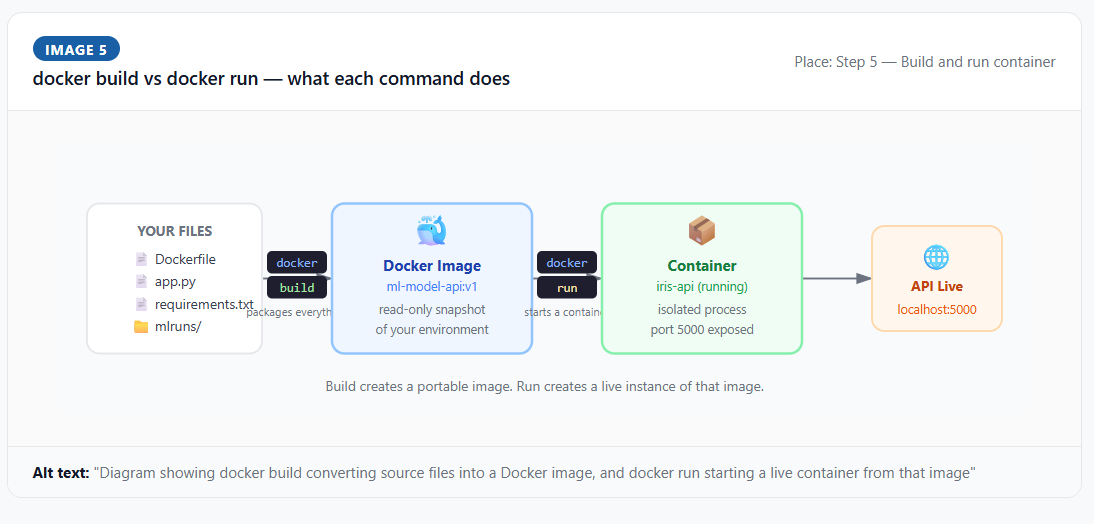

Build and run the container

~10 min

Make sure Docker Desktop is open and showing “Running” before proceeding.

```bash
# Build the image (-t gives your image a name and tag)
docker build -t ml-model-api:v1 .

# Run the container (-p maps host port to container port, -d runs in background)
# If port 5000 is taken on your machine, map another host port, e.g. -p 5001:5000,
# and use that port in the URLs below
docker run -p 5000:5000 --name iris-api -d ml-model-api:v1

# Verify it's running
docker ps

# Check the logs
docker logs iris-api
```

Figure 5: Docker build successfully completed — image tagged as ml-model-api:v1

Handy commands:

- docker stop iris-api: stop the container

- docker start iris-api: start it again

- docker rm iris-api: delete it (stop it first)

- docker images: list all images
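docker run -d returns as soon as the container starts, but gunicorn still needs a moment to import app.py and load the model, so a request sent immediately can be refused. A small polling loop avoids guessing. `wait_for_health` is a hypothetical helper built on the standard library's urllib, and it assumes the /health endpoint from app.py on port 5000.

```python
import http.client
import time
import urllib.request


def wait_for_health(url: str = "http://localhost:5000/health",
                    timeout: float = 30.0, interval: float = 1.0) -> bool:
    """Poll `url` until it returns HTTP 200 or `timeout` seconds have passed."""
    deadline = time.monotonic() + timeout
    while True:
        try:
            with urllib.request.urlopen(url, timeout=interval) as resp:
                if resp.status == 200:
                    return True
        except (OSError, http.client.HTTPException):
            # Refused, reset, timed out, or an HTTP error status
            # (urllib.error.URLError and HTTPError both subclass OSError).
            pass
        if time.monotonic() >= deadline:
            return False
        time.sleep(interval)
```

Call it right after docker run; if it returns False, docker logs iris-api usually shows why the server never came up.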

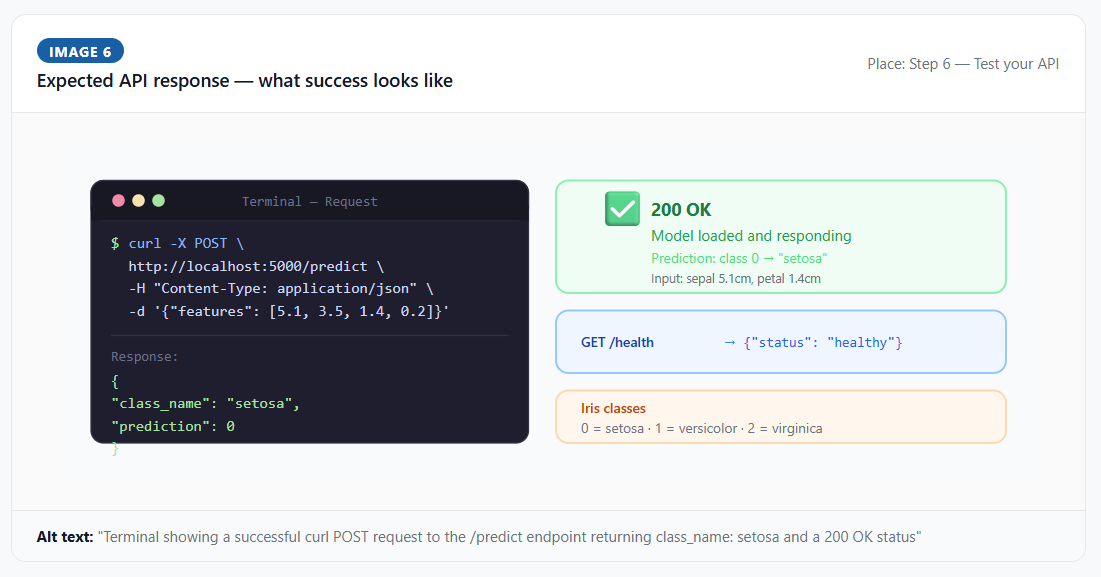

Test your API

~5 min

Your model is now running inside a Docker container and listening on port 5000. Test it with curl:

```bash
# Health check first
curl http://localhost:5000/health

# Make a prediction: these features are typical of Iris setosa
curl -X POST http://localhost:5000/predict \
  -H "Content-Type: application/json" \
  -d '{"features": [5.1, 3.5, 1.4, 0.2]}'
```

Figure 6: Successful prediction response — the model returns class 0 (setosa)

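Expected response: {"class_name":"setosa","prediction":0}. Flask sorts JSON keys alphabetically by default, so class_name comes first; key order makes no difference to JSON clients.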
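If you'd rather call the API from Python than from curl, the standard library is enough. The `predict` function below is a hypothetical client sketch that sends the same JSON body as the curl command above and returns the decoded response; it assumes the container is reachable at localhost:5000.

```python
import json
import urllib.request
from typing import Any, Dict, List


def predict(features: List[float],
            base_url: str = "http://localhost:5000") -> Dict[str, Any]:
    """POST features to /predict and return the decoded JSON response."""
    body = json.dumps({"features": features}).encode("utf-8")
    req = urllib.request.Request(
        f"{base_url}/predict",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    # urlopen raises urllib.error.HTTPError for 4xx/5xx responses.
    with urllib.request.urlopen(req, timeout=10) as resp:
        return json.loads(resp.read().decode("utf-8"))


# Example (requires the container from the previous step to be running):
# print(predict([5.1, 3.5, 1.4, 0.2]))
```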

Troubleshooting common errors

What to do next

You now have a working, containerized ML API. Here’s how to level it up from a tutorial project into production-ready infrastructure:

Related articles

Now that you’ve deployed a model with MLflow, these articles cover the tools that fit into the same workflow:

- MLflow vs Weights & Biases — full comparison (2026)

- MLflow vs ClearML — which open source MLOps tool wins?

- 7 best Weights & Biases alternatives (free & paid) for 2026

- Neptune AI alternatives — migration guide after the shutdown

You built a deployable ML API — now take it further

You’ve gone from a trained model to a live Docker container serving real predictions over HTTP. That’s the core skill that separates ML engineers who “train models” from engineers who “ship ML products.”

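The next step: pick a cloud provider and deploy this container publicly. Google Cloud Run has a free tier that covers light traffic and can deploy a container image in a few minutes. It tells your container which port to listen on through the PORT environment variable (8080 by default), so either set the service's container port to 5000 or bind gunicorn to $PORT.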

Written by Ayub Shah — ML engineering student testing MLOps tools so you don’t have to.
